Executive Summary

Clinical Trials in 2026 are increasingly evaluated as systems: not only whether sites were monitored, but whether quality was designed in, risks were managed proportionately, and computerized systems preserved data integrity end‑to‑end.

In practical terms, this reframes Clinical Trials leadership from “execution volume” to governance: clear accountability across sponsor, CRO, sites, and vendors; traceable decisions; and objective KPIs that trigger action rather than decorate a dashboard.

Hybrid and decentralized elements are now mainstream within Clinical Trials, but they hold up only when protocols specify remote modalities, variability controls, and safety processes. FDA guidance frames decentralized elements as acceptable under the same regulatory requirements, provided oversight and data integrity are engineered up front.

Europe adds a distinct operational pressure: Clinical Trials submissions and maintenance are structured through a single system with defined maximum timelines and clock‑stops, plus an explicit transition requirement for trials that continue beyond 31 January 2025.

South Korea operates as a practical speed node for multinational Clinical Trials when parallel review and operational readiness are designed deliberately (regulatory and IRB clocks are described in national materials), but “fast clocks” do not compensate for weak document alignment or late vendor/system readiness.

A special stress‑test is radiopharmaceutical and theranostic Clinical Trials, where half‑life‑driven logistics, imaging standardization, and traceability make Clinical Trials performance inseparable from a tightly controlled timetable and data lineage.

Governance First: What Modern GCP Now Demands of Clinical Trials

The most consequential 2026 change in Clinical Trials is not a new gadget; it is a new inspection logic. The cornerstone is the revised good clinical practice guideline adopted as ICH E6(R3) Step 4 final on 06 January 2025 by the International Council for Harmonisation (ICH), which frames sponsor accountability around quality management and risk‑proportionate control across the lifecycle of Clinical Trials.

This governance stance is tightly coupled with ICH E8(R1), which formalizes “design quality into the study” through prospective identification of critical‑to‑quality (CtQ) factors: those aspects most likely to threaten participant protection or result reliability if they fail.

Analytical defensibility is equally front‑loaded: the estimand framework in ICH E9(R1) requires explicit alignment between objectives, intercurrent events, and missing data handling before the first participant is enrolled, so that the effect estimate in Clinical Trials is interpretable and decision‑grade.

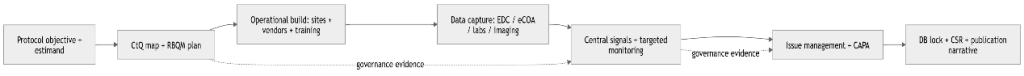

For operational teams, these principles translate into a Clinical Trials “evidence spine” that can survive publication, inspection, and internal governance review.

First, build a protocol‑specific critical‑to‑quality map and a proportional risk plan that defines (a) what could meaningfully harm participants or compromise primary results, (b) what control prevents the harm, and (c) what signal demonstrates the control is working.

Second, govern computerized systems as a single evidence chain. ICH E6(R3) implementations describe user management and access controls for computerized systems used in Clinical Trials, while U.S. electronic‑record controls for closed systems include secure, computer‑generated, time‑stamped audit trails that independently record actions that create, modify, or delete electronic records without obscuring prior information.

Third, treat vendor oversight as an auditable interface: responsibilities, escalation criteria, decision rights, and evidence of follow‑up. These “interfaces” are where inspection findings accumulate when Clinical Trials are executed across multiple service providers and technology vendors.

From Design to Global Delivery: Engineering Clinical Trials for Speed and Trust

Clinical Trials performance is often described as enrollment speed, but many high‑cost failures begin as interface failures: eligibility assumptions that do not match real patients, protocol complexity that drives missingness, and vendor workflows that fragment traceability.

A 2026‑ready operating approach treats each domain of Clinical Trials as an engineered subsystem, with explicit tolerances, owners, and measurable outputs.

Design choices in Clinical Trials should be audited back to the decision question: what effect is being estimated, under what intercurrent events, and with what operational tolerances.

This is also where cost and timeline are born. Narrow visit windows and high participant burden can inflate protocol deviations and missing data, destabilizing analysis and stretching the clinical study report cycle.

Operations in Clinical Trials are no longer synonymous with frequent on‑site monitoring. Contemporary expectations emphasize proportional oversight, with centralized signals and targeted follow‑up mapped to critical‑to‑quality factors.

Start‑up is a governance outcome. Korea‑focused guidance underscores that the start‑up clock includes feasibility, IND/IRB preparation, contracting, vendor and system setup, and site activation; “parallel review” only delivers speed when readiness work is finished in parallel.

Patient recruitment remains the most stubborn limiter of Clinical Trials timelines in many therapeutic areas; one large‑company summary reports that a high proportion of international Clinical Trials do not meet recruitment targets on schedule.

Operationally, recruitment is best managed as a measured funnel—time to first participant, screening cycle time, screen failure rate, randomization rate, withdrawals, and visit completion—linked to controllable levers (pre‑screening logic, burden reduction, site activation discipline, patient‑facing materials).
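The funnel described above can be sketched as a small calculation per site. This is a minimal illustration, not a validated metrics definition: the `SiteFunnel` fields, the site identifier, and the sample numbers are all hypothetical, and screening cycle time is omitted because it needs per-participant dates.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SiteFunnel:
    """Hypothetical per-site counts; field names are illustrative only."""
    site_id: str
    activated_on: date
    first_participant_on: Optional[date]
    screened: int
    screen_failures: int
    randomized: int
    withdrawn: int
    visits_expected: int
    visits_completed: int


def ratio(numerator: int, denominator: int) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def funnel_metrics(site: SiteFunnel) -> dict:
    """Compute the funnel measures named in the text for one site."""
    days_to_first = (
        (site.first_participant_on - site.activated_on).days
        if site.first_participant_on
        else None
    )
    return {
        "days_to_first_participant": days_to_first,
        "screen_failure_rate": ratio(site.screen_failures, site.screened),
        "randomization_rate": ratio(site.randomized, site.screened),
        "withdrawal_rate": ratio(site.withdrawn, site.randomized),
        "visit_completion_rate": ratio(site.visits_completed, site.visits_expected),
    }


# Invented sample data for a hypothetical site.
site = SiteFunnel("SITE-01", date(2026, 3, 2), date(2026, 3, 30),
                  screened=40, screen_failures=10, randomized=28,
                  withdrawn=2, visits_expected=120, visits_completed=114)
metrics = funnel_metrics(site)
```

Returning `None` instead of zero for an empty denominator keeps a site with no screened participants from looking like a site with a perfect screen failure rate.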

Virtual and hybrid Clinical Trials are now routine rather than exceptional: telehealth, in‑home nursing, local healthcare providers, and remote data acquisition can be acceptable when variability is limited and processes are specified.

The regulatory insight is blunt: decentralized elements do not reduce sponsor responsibility; they multiply interfaces that must be governed (training, oversight, risk assessment, privacy, and source definition).

Data integrity is the connective tissue. Industry assessments continue to treat electronic data capture as an integral platform for the collection and management of Clinical Trials data, while ICH E6(R3) implementations explicitly address user management and access controls for computerized systems used in Clinical Trials.

At the compliance layer, teams should be able to explain why an electronic record is trustworthy: closed‑system controls, access limitation, and audit trails that record who changed what and when.
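To make “who changed what and when” concrete, the following is a minimal sketch of an append-only audit trail in which a correction never overwrites the prior value. It is an illustration of the idea only, not a validated or compliant implementation; the class names, field names, and user identifier are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List, Optional, Tuple


@dataclass(frozen=True)
class AuditEntry:
    """One immutable audit-trail row (illustrative structure)."""
    timestamp: datetime
    user: str
    action: str  # "create", "modify", or "delete"
    field: str
    old_value: Any
    new_value: Any
    reason: Optional[str]


class AuditedRecord:
    """Holds current values plus an append-only history of every change."""

    def __init__(self) -> None:
        self._values: Dict[str, Any] = {}
        self._trail: List[AuditEntry] = []

    def _log(self, user: str, action: str, field: str,
             old: Any, new: Any, reason: Optional[str]) -> None:
        # The timestamp is generated by the system, not supplied by the user.
        stamp = datetime.now(timezone.utc)
        self._trail.append(AuditEntry(stamp, user, action, field, old, new, reason))

    def set(self, user: str, field: str, value: Any,
            reason: Optional[str] = None) -> None:
        action = "modify" if field in self._values else "create"
        self._log(user, action, field, self._values.get(field), value, reason)
        self._values[field] = value

    def delete(self, user: str, field: str, reason: str) -> None:
        old = self._values.pop(field)  # raises KeyError if the field is absent
        self._log(user, "delete", field, old, None, reason)

    @property
    def trail(self) -> Tuple[AuditEntry, ...]:
        """Read-only copy of the history; callers cannot edit past entries."""
        return tuple(self._trail)


record = AuditedRecord()
record.set("crc_user_01", "systolic_bp", 128)
record.set("crc_user_01", "systolic_bp", 182, reason="transcription error")
history = [(e.action, e.old_value, e.new_value) for e in record.trail]
```

The design choice worth noting is that the old value is stored alongside the new one on every entry, so the original observation stays visible after a correction.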

At the interoperability layer, the operational risk is reconciliation latency (EDC ↔ lab ↔ imaging ↔ safety), because late or inconsistent reconciliation pushes database lock, analysis readiness, and downstream submission milestones.
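Reconciliation latency can be measured rather than estimated. The sketch below compares event identifiers between one source system and the EDC and reports how long unmatched events have been open. The function name, event identifiers, and dates are hypothetical, and matching on a shared identifier is an assumption; real reconciliation usually compares more fields.

```python
from datetime import date
from typing import Dict


def reconciliation_report(source_events: Dict[str, date],
                          edc_events: Dict[str, date],
                          as_of: date) -> dict:
    """Compare event ids between a source system and the EDC.

    Both inputs map an event id to the date the event was recorded.
    """
    missing_in_edc = sorted(set(source_events) - set(edc_events))
    missing_in_source = sorted(set(edc_events) - set(source_events))
    # Age in days of each source event that has no EDC counterpart yet.
    open_lag_days = {
        event_id: (as_of - source_events[event_id]).days
        for event_id in missing_in_edc
    }
    return {
        "missing_in_edc": missing_in_edc,
        "missing_in_source": missing_in_source,
        "open_lag_days": open_lag_days,
        "max_open_lag_days": max(open_lag_days.values(), default=0),
    }


# Invented sample data: a safety database versus the EDC.
safety = {"SAE-001": date(2026, 4, 1), "SAE-002": date(2026, 4, 10)}
edc = {"SAE-001": date(2026, 4, 2), "SAE-003": date(2026, 4, 12)}
report = reconciliation_report(safety, edc, as_of=date(2026, 4, 20))
```

Tracking `max_open_lag_days` against a predefined limit gives an early signal well before the lag threatens database lock.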

In 2026, “risk management” in Clinical Trials is not a slide; it is a closed loop: identify risks, implement controls, monitor signals, and document actions.

A high‑yield discipline is to predefine “red flags” that mandate escalation (e.g., recurring critical deviations, audit‑trail anomalies, systemic site performance drift, protocol amendment pressure), and to connect each red flag to a pre‑agreed action.
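One way to keep red flags from staying on a slide is to write each one as a rule paired with its pre-agreed action. The sketch below shows that pairing under stated assumptions: the signal names, thresholds, and action wording are placeholders chosen for illustration, not recommended values.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class RedFlag:
    """A predefined trigger and the action agreed for it in advance."""
    name: str
    is_raised: Callable[[Dict[str, float]], bool]
    action: str


# Thresholds below are placeholders, not recommended values.
RED_FLAGS: List[RedFlag] = [
    RedFlag(
        name="recurring critical deviations",
        is_raised=lambda s: s.get("critical_deviations_last_90d", 0) >= 2,
        action="escalate to sponsor quality lead and open CAPA",
    ),
    RedFlag(
        name="audit-trail anomaly",
        is_raised=lambda s: s.get("unexplained_admin_changes", 0) > 0,
        action="freeze configuration and investigate",
    ),
    RedFlag(
        name="site performance drift",
        is_raised=lambda s: s.get("query_aging_days_median", 0) > 14,
        action="schedule targeted monitoring visit",
    ),
]


def escalations(signals: Dict[str, float]) -> List[Tuple[str, str]]:
    """Return (flag name, pre-agreed action) for every flag the signals raise."""
    return [(flag.name, flag.action) for flag in RED_FLAGS if flag.is_raised(signals)]


# Invented signals for one hypothetical site.
site_signals = {
    "critical_deviations_last_90d": 3,
    "unexplained_admin_changes": 0,
    "query_aging_days_median": 9,
}
raised = escalations(site_signals)
```

Because the action is stored next to the rule, a raised flag always arrives with its agreed response, which is the closed loop the text describes.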

Radiopharmaceutical and theranostic Clinical Trials amplify what is already true for all Clinical Trials: if scheduling slips, quality and data slip. The attached execution note highlights five schedule‑to‑quality failure modes: (1) supply delays that collapse dosing/visit windows, (2) delayed site activation prolonging start‑up, (3) complex dosing‑day workflows driving documentation and timestamp errors, (4) missing linkage between imaging, dosing, and manufacturing records weakening traceability, and (5) slow reconciliation across EDC/imaging/safety pushing database lock and analysis timelines.

The same note proposes seven questions to answer before first patient in (FPI) that operationalize CtQ thinking for these Clinical Trials: (1) whether the site has nuclear medicine and radiation‑safety capability; (2) how supply, import, and labeling are managed; (3) whether dosing‑day patient flow is defined; (4) how imaging read standards and QC are governed; (5) who owns EDC–imaging–safety reconciliation and how often it runs; (6) what rescheduling logic applies when supply slips; and (7) which early‑warning KPIs catch delays, errors, and data mismatches before they become systemic.

The broader radiotheranostics literature converges on the same constraints: short half‑lives demand rapid logistics, global supply chains can be vulnerable due to limited production capacity, and sustainable access requires resilient governance across the supply chain.

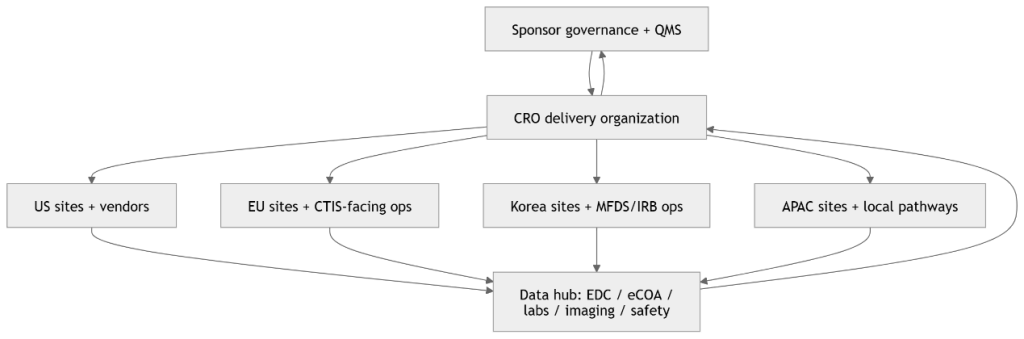

Clinical Trials are rarely “fully outsourced” in the accountability sense; the sponsor remains accountable while the CRO industrializes execution.

In practical contracting, demand that Clinical Trials responsibilities are explicit and testable: who owns each system, who validates, who reviews audit trails, who performs reconciliation, who decides on protocol deviations, and what KPI thresholds trigger CAPA.

For additional start‑up clocks, Korea execution checklists, and CRO governance notes updated through 2026, see https://intoinworld.com/industry-insights

Regional Reality Check: US, EU, Korea, and APAC

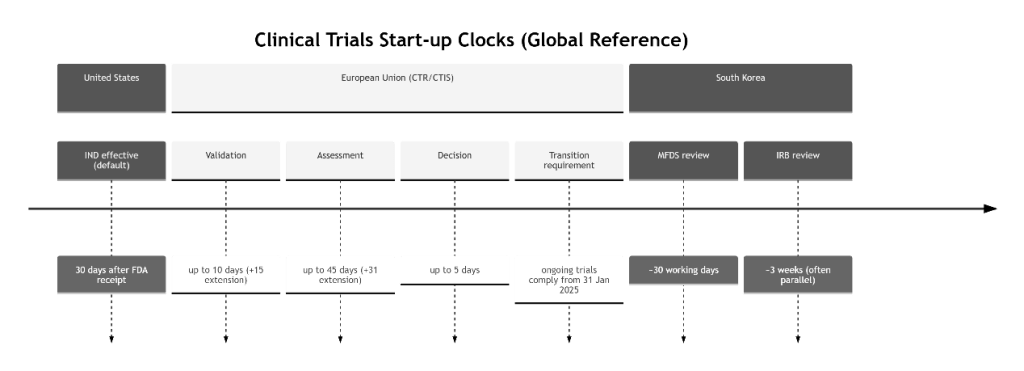

Clinical Trials strategy becomes credible when it is written as a timeline with gates, not as a narrative with aspirations. Review clocks are only part of the calendar; contracts, translations, vendor onboarding, and site activation are often the true critical path.

In the United States, an IND generally goes into effect 30 days after the U.S. Food and Drug Administration (FDA) receives it (unless a clinical hold is imposed or earlier notification is provided).

For hybrid Clinical Trials, decentralized‑elements guidance emphasizes that remote modalities should be specified and controlled, with training, oversight, and continuing risk assessment as core success factors.

In the European Union, the Clinical Trials Regulation enables a single application via CTIS and collaborative assessment by member states, and the transition period requires that trials authorized under the prior directive that continue running from 31 January 2025 comply with the regulation and have information recorded in CTIS.

The European Medicines Agency describes CTIS as the single online system supporting these interactions, and the EU’s practical guide describes defined maximum timelines for validation, assessment, and decision (with clock‑stops for RFIs and special extensions for certain product types), making document consistency and change control highly visible in Clinical Trials.

Operationally, the European Commission emphasizes that CTIS became the single entry point on 31 January 2023, reinforcing that portal discipline is not optional for multinational Clinical Trials.

In South Korea, national materials emphasize two planning facts for Clinical Trials: the MFDS review benchmark is 30 working days, and IRB review often proceeds in parallel, with typical IRB timelines described in weeks.
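A working-day benchmark translates into a calendar date only after weekends and holidays are counted. The sketch below shows the arithmetic under simple assumptions: counting starts on the day after submission, only Monday to Friday count, and the holiday set is supplied by the planner. The function name is hypothetical, and how the authority actually counts days should be confirmed against the national materials.

```python
from datetime import date, timedelta
from typing import FrozenSet


def add_working_days(start: date, working_days: int,
                     holidays: FrozenSet[date] = frozenset()) -> date:
    """Return the date reached after counting `working_days` Mon-Fri days
    following `start`, skipping any dates listed in `holidays`."""
    current = start
    remaining = working_days
    while remaining > 0:
        current += timedelta(days=1)
        # weekday() is 0 for Monday through 4 for Friday.
        if current.weekday() < 5 and current not in holidays:
            remaining -= 1
    return current


submitted = date(2026, 3, 2)  # a Monday, chosen only as an example
benchmark_date = add_working_days(submitted, 30)

# A single placeholder holiday (not a real calendar) shifts the result by one working day.
with_holiday = add_working_days(submitted, 30, frozenset({date(2026, 3, 3)}))
```

With no holidays, 30 working days from a Monday is exactly six calendar weeks, so the gap between a "30-day" plan and the real date is easy to underestimate once public holidays are added.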

Practically, Ministry of Food and Drug Safety (MFDS) speed is maximized when the dossier is aligned and site/vendor readiness is real; Korea‑specific guidance warns that misalignment between protocol and supporting documents increases review questions and extends timelines.

For many global sponsors, major tertiary centers in Seoul—including Asan Medical Center and Seoul National University Hospital—combine high patient throughput with mature electronic medical record ecosystems, which can strengthen feasibility and accelerate Clinical Trials when operational interfaces are well controlled.

Within APAC, regulatory pathways remain heterogeneous. In China, the National Medical Products Administration describes a 60‑day decision window for drug clinical trial applications, with a “deemed approved” mechanism when no notice is issued within the timeline.

The same regulator has also communicated a 30‑day clinical trial review and approval pathway for defined categories, illustrating that APAC “speed channels” are increasingly policy‑driven and indication‑dependent.

In Japan, Pharmaceuticals and Medical Devices Agency (PMDA) materials describe a pre‑start notification window in which initial clinical trial notifications for trial drugs are submitted more than 31 days before the planned start, reinforcing the need for disciplined pre‑start localization and planning in Clinical Trials.

Finally, Australia often functions as a complementary hub in APAC radiopharmaceutical Clinical Trials where logistics and capacity planning benefit from geographic redundancy, especially when isotopes and delivery schedules are time‑critical.

The strategic implication is stable across regions: hold the scientific question and data‑governance standard constant, and adapt the operational interface (workflows, language, logistics, and local documentation) without fragmenting the evidence story in Clinical Trials.

Tables

The tables below translate modern expectations for Clinical Trials (quality by design, risk‑based quality management, decentralized elements governance, and region‑specific start‑up clocks) into practical planning artifacts. They synthesize ICH E6(R3)/E8(R1)/E9(R1), FDA decentralized‑elements guidance, the EU CTR/CTIS operating framework, KoNECT’s timeline summaries, and 2026 CRO operating notes published on Intoinworld.

Table 1. Operating‑model trade‑offs in Clinical Trials (cost, timeline, risk)

| Operating model | Best‑fit use case | Timeline accelerators | Hidden cost drivers | Primary CtQ risks | Minimum governance controls to pre‑specify |
| --- | --- | --- | --- | --- | --- |
| Site‑centric | Procedures requiring site infrastructure (infusion, complex imaging, specialized safety monitoring) | Fewer external handoffs; simpler vendor topology | Site burden; travel burden for participants; monitoring intensity | Missed visits and dropouts; site‑to‑site endpoint variability; slower detection of systemic issues | CtQ map; RBQM signals; standardized endpoint training; TMF completeness rules; fixed reconciliation cadence |
| Hybrid | Most registrational trajectories; complex designs needing both integrity and broad access | Remote follow‑ups; flexible scheduling; larger catchment | Duplication if responsibilities unclear (site vs local HCP vs vendor); DHT logistics; training overhead | Source fragmentation; inconsistent remote documentation; variable home procedures | Protocol specifies remote vs onsite; telehealth visit traceability; role/delegation matrix; audit‑trail review plan; centralized monitoring rules and triggers |
| Decentralized‑heavy | Low‑risk interventions or stable IPs; long follow‑up with high patient burden; supplemental endpoints | Reduced travel; potential retention benefits | High coordination costs; tech support; higher governance/change‑control load | Increased variability; privacy failures; uncontrolled software/device versioning | Explicit role ownership; DHT validation scope and versioning; privacy procedure; audit‑trail governance; predefined “rescue” workflows when remote execution fails |

Table 2. Evidence spine for inspection‑resilient Clinical Trials (process + ownership)

| Process block | Outputs to lock before scaling | Typical primary owner | Evidence to preserve for inspection/publication | Failure signal to monitor |
| --- | --- | --- | --- | --- |
| Protocol intent & estimand alignment | Estimand statement; intercurrent‑event strategy; endpoint tolerances and windows | Sponsor + Biostatistics (with CRO input) | Decision rationale; version control; analysis assumptions traceable to protocol | Amendment pressure; endpoint ambiguity; rising missingness risk |
| CtQ & RBQM design | CtQ map; risk register; thresholds and triggers; escalation paths | Sponsor (accountable) + CRO (operational design) | Risk review cadence; action logs; CAPA linkage | Repeated critical deviations; unresolved central signals; recurring root causes |
| Start‑up readiness | Aligned country packages; translation glossary; site activation checklist; vendor plan | CRO start‑up lead + Sponsor oversight | Submission alignment sheet; training completion; system‑access controls | Contract/budget churn; repeated translation revisions; vendor onboarding drag |
| Data + system governance | Data mgmt plan; edit checks; reconciliation plan; audit‑trail plan; change control plan | CRO Data Mgmt + Sponsor QMS | Validation status overview; user roles/access; audit‑trail review records | Query aging; reconciliation lag; unexplained system changes |
| Conduct & oversight | Monitoring plan; centralized monitoring signals; issue mgmt SOPs | CRO ClinOps + Sponsor medical oversight | Delegation logs; visit‑modality traceability; issue→CAPA evidence | Slow issue closure; recurrence after CAPA |
| Closeout & reporting | DB lock criteria; CSR narrative controls; TMF QC closeout | Sponsor + CRO (writing/ops) | Lock checklist; data lineage; narrative consistency checks | Post‑lock rework; missing essential documents |

Table 3. KPI dashboard for Clinical Trials (KPI → trigger → action)

| KPI domain | KPI (example) | Operational definition | Trigger for action (illustrative) | First-line action that proves control |
| --- | --- | --- | --- | --- |
| Speed | Site activation cycle time | Final package → site activated | Outliers vs site cohort; repeated stoppage from contracting | Fix contract template bottleneck; tighten readiness checklist; re‑baseline critical path |
| Recruitment | Time to first participant | Site activated → first participant | “Silent site” period beyond plan | Activation rescue visit; PI/CRC workflow check; update recruitment materials |
| Recruitment quality | Screen failure rate | Screen failures / participants screened | Unexplained drift after protocol change | Re‑train I/E interpretation; adjust pre‑screen; reduce visit burden where possible |
| Data quality | CtQ missing data rate | Missing CtQ fields at visits | Rising trend at a site/modality | Targeted monitoring; workflow retraining; strengthen edit checks and reminders |
| Data speed | Query cycle time | Query open → resolved | Aging beyond predefined limit | Site coaching; enforce query discipline; automate escalation |
| Safety | SAE follow‑up timeliness | Initial SAE → complete follow‑up | Repeated late follow‑ups | Reinforce escalation; reconcile safety vs EDC weekly; adjust staffing |
| System governance | Audit‑trail exceptions | Unexplained configuration/user actions | Any unexplained admin change (high criticality) | Freeze config; investigate; CAPA; tighten access model and periodic review |
| Integration | Reconciliation lag | Lab/image/safety vs EDC | Lag threatens interim lock or DB lock | Increase cadence; repair interface; assign single reconciliation owner |

Table 4. Region-by-region friction points in Clinical Trials (what changes, what must not)

| Region | Primary gate | Publicly described planning clock | What commonly slows the real path | Planning response for global teams |
| --- | --- | --- | --- | --- |
| United States | IND effective + IRB | IND effective at 30 days unless clinical hold | Contracts, site workload, vendor setup | Build day‑0 package; pre‑plan oversight and data workflows |
| European Union | CTA via CTIS | Defined maximum periods for validation/assessment/decision; clock‑stops for RFIs | Dossier inconsistency across Part I/II; change control; CTIS data discipline | Pre‑align documents; treat CTIS metadata as first‑class deliverables |
| South Korea | MFDS + IRB (often parallel) | MFDS ~30 working days; IRB ~weeks, often parallel | Document misalignment, translations, vendor validation, IP import/labeling | “First‑submission quality”; parallelize only if readiness is real |
| China | NMPA/CDE pathway | 60‑day decision window with “deemed approved” if no notice | Local documentation, ethics cadence, Q&A cycles | Keep scientific definitions constant; localize workflows/language |
| Japan | CTN pre‑start window | Initial CTN submitted >31 days prior | Local language/docs; consultation cadence | Plan localization early; lock protocol intent and tolerances |

Figure

Figure 1 (Process diagram): Clinical Trials evidence spine from protocol intent to CSR

Figure 2 (Clinical Trials stage flow + timeline): Region-aware start-up clocks that shape first patient in

Figure 3 (Global CRO network map): Multi-region Clinical Trials execution as a controlled network