Abstract

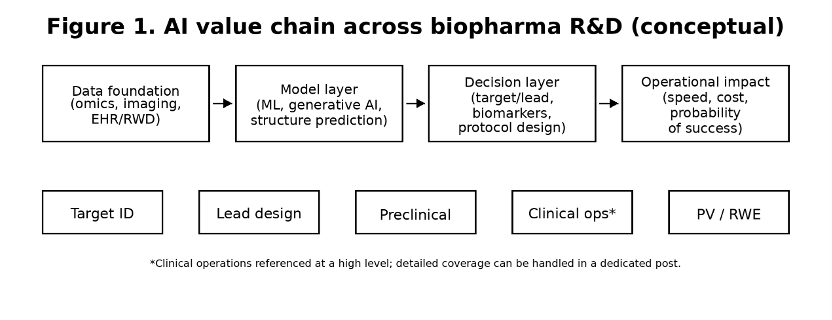

AI adoption in biopharma has moved from pilot projects to measurable operational impact. In 2026, the competitive difference is less about who has the largest model and more about who can do three things: demonstrate model credibility for a defined context of use (COU), validate performance on held-out data, and maintain data integrity, traceability, and lifecycle controls across R&D that align with ALCOA+ (attributable, legible, contemporaneous, original, and accurate, plus complete, consistent, enduring, and available).

This briefing summarizes where AI is creating real leverage, how operating models are changing, what regulators increasingly expect for credibility, and how sponsors can operationalize AI with CRO partners without losing auditability, reproducibility, or regulatory defensibility.

1. What changed: from experiments-first to model-informed R&D

Definition (2026 view)

Model-informed R&D refers to decision-making in which AI outputs are treated as regulated evidence inputs rather than exploratory insights, and therefore require prospective validation, documented limitations, and defined human oversight.

A practical shift is underway: teams are no longer asking whether AI can generate insights, but whether those insights survive prospective testing on held-out cohorts and improve real decision quality. The strongest early gains appear where decisions are repetitive, data-rich, and historically slow: target triage, hit enrichment, biomarker discovery, and document-heavy evidence workflows.

The operational lesson is blunt: speed is not the limiting factor. Validation and governance are. If a model cannot be tested prospectively, explained well enough to be audited, or reproduced across versions, its value collapses the moment it influences a regulated decision.
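One way to make "held-out" concrete is to split by patient, site, or cohort rather than by row, so that no group contributes to both training and evaluation. The sketch below is a minimal illustration using only the Python standard library. The record layout, the `patient_id` field, the `salt` value, and the 20% hold-out fraction are all assumptions made for the example, not something this briefing prescribes.

```python
import hashlib


def assign_split(group_id: str, holdout_fraction: float = 0.2, salt: str = "cou-v1") -> str:
    """Deterministically assign a whole group (e.g. a patient) to one split.

    Hashing the group ID keeps the assignment stable across reruns, so the
    same groups stay held out when the model is retrained or re-versioned.
    """
    digest = hashlib.sha256(f"{salt}:{group_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # value in [0, 1)
    return "holdout" if bucket < holdout_fraction else "train"


def split_records(records, group_key="patient_id", holdout_fraction=0.2):
    """Split records so that every record of a group lands in the same split."""
    train, holdout = [], []
    for record in records:
        split = assign_split(str(record[group_key]), holdout_fraction)
        (holdout if split == "holdout" else train).append(record)
    return train, holdout


def leaked_groups(train, holdout, group_key="patient_id"):
    """Return group IDs present in both splits; an empty set means no leakage."""
    return {r[group_key] for r in train} & {r[group_key] for r in holdout}
```

A check such as `leaked_groups(train, holdout) == set()` can be run before every evaluation and stored with the validation results, which gives an auditor direct evidence that the held-out cohort was never seen during training.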

| Decision | AI approach | Practical validation signal | Common pitfall |
| --- | --- | --- | --- |
| Target prioritization | ML on multi-omics + NLP | Prospective test on held-out cohorts | Leakage / cohort bias |
| Hit-to-lead | In silico screening; generative design | Wet-lab confirmation rate (enrichment) | Novelty without developability |
| Biomarkers | Clustering; representation learning | Reproducible across sites/platforms | Batch effects mistaken for biology |
| Evidence workflows | Document intelligence; analytics assist | Traceable lineage + audit readiness | Untracked model drift |
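For the hit-to-lead row, the "wet-lab confirmation rate (enrichment)" signal is often summarized as an enrichment factor: the confirmation rate among model-selected compounds divided by the rate in a baseline screen. The sketch below assumes that definition. The function name and the counts in the usage comment are illustrative, not data from any program.

```python
def enrichment_factor(
    confirmed_selected: int,
    tested_selected: int,
    confirmed_baseline: int,
    tested_baseline: int,
) -> float:
    """Ratio of the model-selected confirmation rate to the baseline rate.

    A value above 1 means the model-selected set confirmed in the wet lab
    more often than the baseline screen did.
    """
    for confirmed, tested in (
        (confirmed_selected, tested_selected),
        (confirmed_baseline, tested_baseline),
    ):
        if tested <= 0 or not 0 <= confirmed <= tested:
            raise ValueError("counts must satisfy 0 <= confirmed <= tested and tested > 0")
    if confirmed_baseline == 0:
        raise ValueError("baseline has no confirmed hits; enrichment is undefined")
    # (cs / ts) / (cb / tb), rearranged to avoid intermediate rounding.
    return (confirmed_selected * tested_baseline) / (tested_selected * confirmed_baseline)


# Illustrative counts: 12 of 100 model-selected compounds confirmed,
# versus 20 of 1000 in a baseline screen.
print(enrichment_factor(12, 100, 20, 1000))  # 6.0
```

Reporting the raw counts alongside the ratio matters, because a high enrichment factor computed from a handful of compounds carries little evidential weight.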

2. Industrial impact: platforms, data assets, and new collaboration patterns

As AI becomes embedded, the boundary between a traditional sponsor and a technology company continues to blur. Large pharma increasingly builds or acquires AI capabilities for long-term differentiation, while AI-native biotechs monetize platforms by running multiple pipelines in parallel.

Across organizations, data is now treated as a regulated asset class: provenance, interoperability, access control, and information security (e.g., GDPR- and HIPAA-aligned de-identification) matter as much as wet-lab throughput.

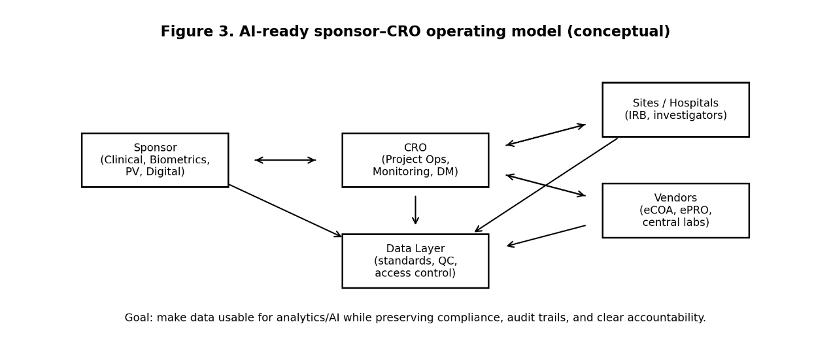

For CROs, the implication is clear: sponsors increasingly expect AI to be operationalized inside regulated workflows, not delivered as isolated tools. This includes SOP alignment, audit-ready documentation, vendor oversight, and clearly defined responsibilities (RACI: responsible, accountable, consulted, informed) across sponsors, CROs, sites, and data owners.

| Operating model | Best fit | Advantage | Risk to manage |
| --- | --- | --- | --- |
| Build | Core IP / long-term differentiation | Full control | Talent demand + longer ramp |
| Buy | Standardizable workflows | Fast rollout | Opacity / vendor lock-in |
| Partner | Need domain + local execution | Shared risk & speed | Accountability gaps without governance |

3. Regulatory reality: credibility and lifecycle controls are now explicit

Regulatory focus has converged on a single question:

For a defined context of use, is the model credible?

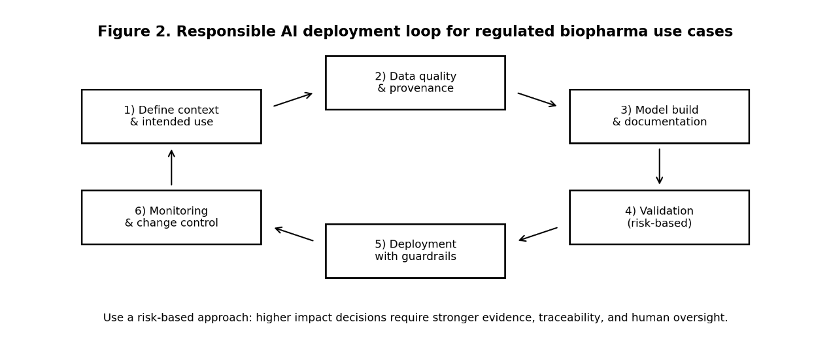

In regulated biopharma, credibility is not a claim; it is evidence. Regulators increasingly expect risk-based validation aligned with Good Machine Learning Practice (GMLP), supported by documentation, traceability, and lifecycle controls such as versioning, monitoring, and formal change control.

When AI influences decisions that can materially affect patient safety, endpoints, or regulatory submissions, organizations must demonstrate:

- Reproducibility of outputs

- Clear documentation of limitations

- Defined human oversight and approval

- Controlled change management across versions
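The four requirements above can be captured in a single decision record per model-informed decision. The sketch below is one possible shape, not a prescribed schema: the `DecisionRecord` fields, the `"GENESIS"` marker, and the hash-chaining approach are assumptions chosen for illustration. Each record stores the hash of the previous one, so editing an earlier record breaks the chain. The last record in the log has no successor, so it would need an external anchor in a real system.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def digest(obj) -> str:
    """Stable SHA-256 fingerprint of a JSON-serializable object."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class DecisionRecord:
    model_version: str      # controlled change management
    input_digest: str       # reproducibility: what the model saw
    output_digest: str      # reproducibility: what the model produced
    known_limitations: str  # documented limitations
    reviewer: str           # human oversight: who reviewed
    approved: bool          # human oversight: the decision taken
    timestamp_utc: str      # supplied by the caller, e.g. ISO 8601
    previous_hash: str      # links this record to the one before it

    def record_hash(self) -> str:
        return digest(asdict(self))


def append_record(log: list, **fields) -> DecisionRecord:
    """Append a record that points at the hash of the current last record."""
    previous = log[-1].record_hash() if log else "GENESIS"
    record = DecisionRecord(previous_hash=previous, **fields)
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Return True if every record points at the hash of its predecessor."""
    expected = "GENESIS"
    for record in log:
        if record.previous_hash != expected:
            return False
        expected = record.record_hash()
    return True
```

Rerunning the same model version on the same input and comparing `output_digest` values is a simple reproducibility check. It only works if the model is deterministic or its random seeds are fixed and recorded.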

| Risk tier | Example | Evidence package | Governance |
| --- | --- | --- | --- |
| Low | Drafting/summarization tools | Human review + basic accuracy checks | Access control; prevent PHI leakage |
| Medium | Feasibility or site scoring analytics | Benchmark vs baseline; bias checks | Versioning; monitoring |
| High | AI-generated evidence affecting submissions | COU validation; traceability; stress tests | Change control; independent review |
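The risk tiers in the table above can be encoded as a lookup that a release checklist runs against. The sketch below transcribes the evidence and governance items from the table. The names `RiskTier`, `REQUIREMENTS`, `missing_items`, and `is_release_ready` are hypothetical, and the sketch assumes tiers are not cumulative (a high-risk use is checked only against the high-risk row), which the table itself does not specify.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Evidence and governance items transcribed from the risk-tier table.
REQUIREMENTS = {
    RiskTier.LOW: {
        "evidence": {"human review", "basic accuracy checks"},
        "governance": {"access control", "PHI leakage prevention"},
    },
    RiskTier.MEDIUM: {
        "evidence": {"benchmark vs baseline", "bias checks"},
        "governance": {"versioning", "monitoring"},
    },
    RiskTier.HIGH: {
        "evidence": {"COU validation", "traceability", "stress tests"},
        "governance": {"change control", "independent review"},
    },
}


def missing_items(tier: RiskTier, evidence, governance) -> dict:
    """Return the required items not yet covered for the given tier."""
    required = REQUIREMENTS[tier]
    return {
        "evidence": required["evidence"] - set(evidence),
        "governance": required["governance"] - set(governance),
    }


def is_release_ready(tier: RiskTier, evidence, governance) -> bool:
    """True only when no evidence or governance item is outstanding."""
    gaps = missing_items(tier, evidence, governance)
    return not gaps["evidence"] and not gaps["governance"]
```

For example, `missing_items(RiskTier.HIGH, {"COU validation"}, {"change control"})` reports that traceability, stress tests, and independent review are still outstanding.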

4. Clinical development touchpoints (high level)

Even when your primary focus is discovery and evidence generation, clinical development is where AI governance becomes real. Large-scale data scanning for eligible participants, analytics-assisted monitoring, and participant support tools can shorten timelines and reduce burden, but only if data protection and accountability are designed in upfront.

For the Korea execution context, pair this AI briefing with the two related resources in our Clinical Trial Information hub.

5. Sponsor–CRO playbook for 2026 (minimum viable steps)

- Write the context of use (COU): what decision changes if the model is correct, and what is the acceptable error?

- Engineer the data layer first: standards, QC, provenance, access control, and audit trails.

- Validate by risk: choose evidence strength based on potential impact (see the risk-tier table in Section 3).

- Design human oversight: define who approves, who can override, and how decisions are logged.

- Operationalize with your CRO: embed governance into SOPs, vendor management, monitoring plans, and reporting.

- Monitor and manage change: detect drift, trigger re-validation, and maintain version traceability.
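The last step, detecting drift and triggering re-validation, can be sketched with a population stability index (PSI) computed on a model input or score. PSI is one common choice among several, not a method this briefing mandates. The 0.25 threshold is a widely used rule of thumb rather than a regulatory limit, and the function names, bin count, and `eps` floor are assumptions for illustration.

```python
import math
from typing import Sequence


def _bin_fractions(values: Sequence[float], edges: Sequence[float], eps: float = 1e-6):
    """Fraction of values per bin; out-of-range values go to the end bins."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = len(counts) - 1  # default: last bin (also catches v >= top edge)
        for i in range(len(counts)):
            if v < edges[i + 1]:
                idx = i
                break
        counts[idx] += 1
    # Floor at eps so empty bins do not produce log(0) or division by zero.
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(
    reference: Sequence[float], current: Sequence[float], n_bins: int = 10
) -> float:
    """PSI between a reference sample (validation time) and a current sample."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    if lo == hi:
        raise ValueError("reference sample has no spread; cannot build bins")
    width = (hi - lo) / n_bins
    edges = [lo + i * width for i in range(n_bins + 1)]
    ref = _bin_fractions(reference, edges)
    cur = _bin_fractions(current, edges)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def needs_revalidation(psi: float, threshold: float = 0.25) -> bool:
    """Flag the model for re-validation when drift reaches the threshold."""
    return psi >= threshold
```

In practice the reference sample would be frozen at validation time and stored with the model version, so that each monitoring run compares against the same baseline and the trigger for re-validation is itself traceable.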

FAQ

Q1 What is the fastest first AI win for a biopharma team?

A1 Pick one data-rich bottleneck (e.g., target triage or hit enrichment) and build a tight validation loop that includes wet-lab confirmation.

Q2 How should sponsors think about regulator expectations for AI?

A2 Start from the context of use and prepare a risk-based credibility package: validation, documentation, traceability, and lifecycle controls.

Q3 What should be documented for AI in regulated workflows?

A3 Data provenance, model versioning, validation results, human oversight rules, and change-control triggers.

Q4 Where do AI programs fail most often in biopharma?

A4 Data quality and generalizability: site-to-site variation, biased cohorts, and silent drift after deployment.

Q5 How can a CRO help beyond execution when AI is involved?

A5 By integrating governance into operational reality: QC gates, audit-ready records, and aligned responsibilities across sponsors, sites, and vendors.

For further information on our CRO services, please feel free to contact our BD team by phone at +82-2-515-8082 or via email at bd@intoinworld.kr